Compare local.ai vs Ollama.ai ⚖️

local.ai has no ratings yet (0 ratings), while Ollama.ai has a rating of 4 based on 4 ratings. Compare the similarities and differences between the two tools using real user reviews focused on features, ease of use, customer service, and value for money.

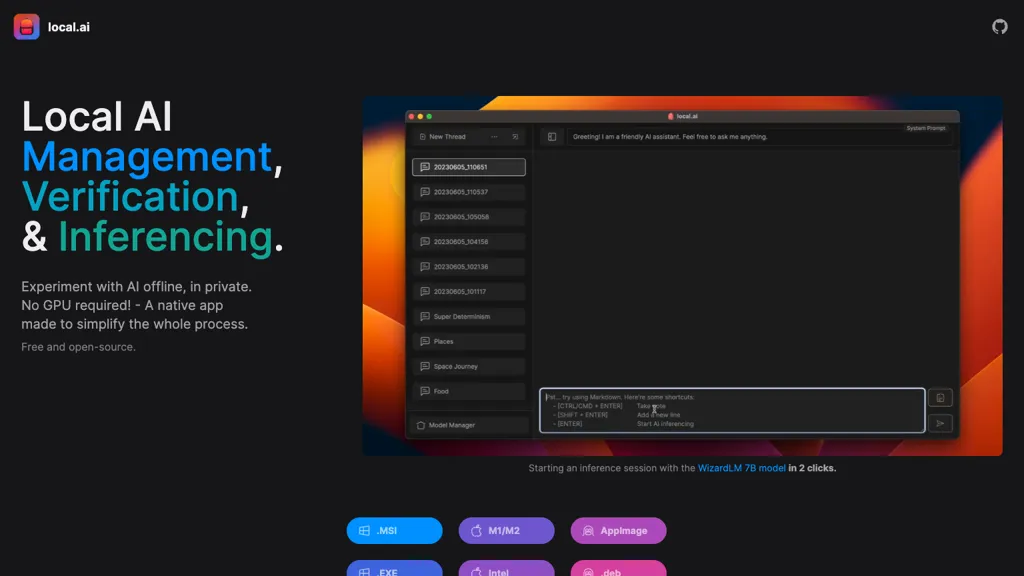

📝 local.ai Description

Local AI Playground by Local.ai is an innovative offline AI management tool. It features CPU inference, memory optimization, upcoming GPU support, browser compatibility, small footprint, and model authenticity assurance for versatile experimental use.

📝 Ollama.ai Description

Ollama is a local AI tool that lets users create, customize, and run language models efficiently without relying on cloud-based platforms. It is available for download on macOS, Windows, and Linux.

local.ai Key Features

✨ Local AI Playground for AI model management and inferencing

✨ Support for CPU inferencing and adaptability to available threads

✨ Support for GPU inferencing and upcoming parallel session management features

✨ Memory-efficient, compact application of less than 10 MB for Mac M2, Windows, and Linux

✨ Digest verification for model integrity and inferencing server for quick AI inferencing
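
Digest verification, as listed above, generally means hashing a downloaded model file and comparing the result with a published checksum. The Python sketch below illustrates that idea only. The function names, the choice of SHA-256, and the chunk size are assumptions made for illustration, not local.ai's actual implementation.

```python
import hashlib
import hmac


def file_digest(path, algorithm="sha256", chunk_size=1 << 20):
    """Return the hex digest of a file, read in chunks so large models fit in memory."""
    hasher = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        # Read fixed-size chunks until read() returns an empty bytes object.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def verify_model(path, expected_hex, algorithm="sha256"):
    """Return True when the file's digest matches the expected hex string."""
    actual = file_digest(path, algorithm)
    # compare_digest avoids leaking the mismatch position through timing.
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

A caller would pass the path of the downloaded model and the checksum published alongside it, then refuse to load the model if `verify_model` returns `False`.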

Ollama.ai Key Features

✨ Customize language models

✨ Create language models

✨ Run large language models locally

✨ Control AI model privacy

✨ Download for macOS, Windows, and Linux
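
To make "run large language models locally" concrete, here is a hedged Python sketch that sends a prompt to a locally running Ollama server over its HTTP API. It assumes the server is listening on its default address (`http://localhost:11434`) and that a model has already been pulled. The model name `llama3` and the helper names `build_request` and `generate` are placeholders chosen for illustration.

```python
import json
import urllib.request

# Assumption: default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model, prompt, url=OLLAMA_URL):
    """Build a POST request asking for a single, non-streamed completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    # Requires a running Ollama server and a locally available model.
    print(generate("llama3", "Explain local inference in one sentence."))
```

Because the request goes to localhost, the prompt and the response stay on the machine, which is the privacy point made in the feature list.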

👍 local.ai Ratings

0 (0 ratings)

👍 Ollama.ai Ratings

4 (4 ratings)

Value for money: 4.0

Ease of Use: 4.0

Performance: 4.0

Features: 4.0

Support: 4.0