What is Prompt Token Counter?

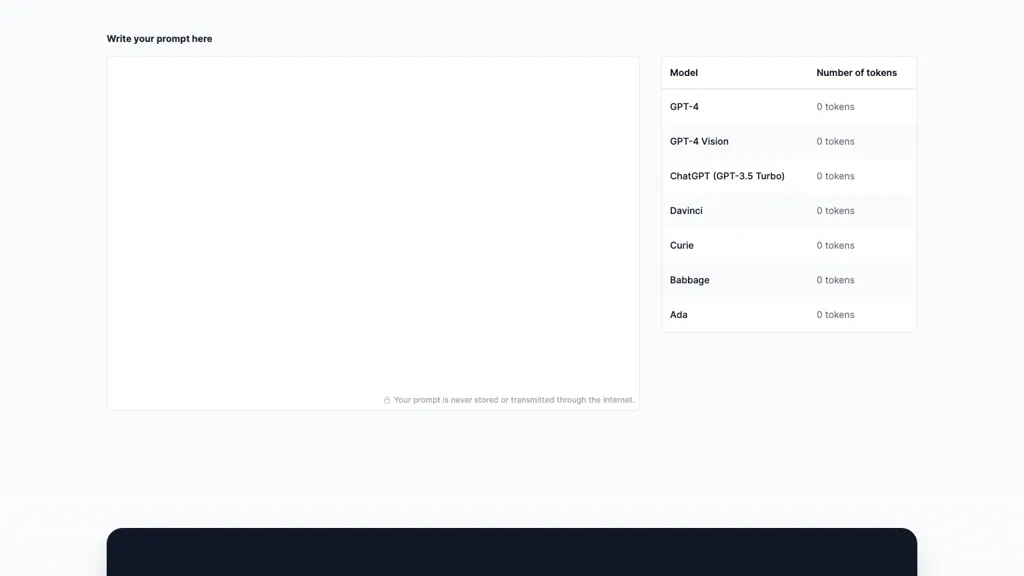

Token Counter for OpenAI Models

The Token Counter for OpenAI Models counts the tokens in text sent to language models such as OpenAI's GPT-3.5, so that interactions stay within the model's token limit. Because that limit covers both the prompt and the model's response, the tool helps users tokenize and count a prompt before sending it, leave room for the expected response, and iteratively shorten or refine the prompt until it fits. Tracking token usage this way also helps users manage costs and write concise, effective prompts.
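The workflow described above (count the prompt's tokens, reserve room for the response, trim until the prompt fits) can be sketched roughly as follows. This is an illustration only, not how Prompt Token Counter itself is implemented. The function names, the limit values, and the 4-characters-per-token estimate are all assumptions; an exact count would need a real tokenizer, such as OpenAI's `tiktoken` library, passed in as `count_tokens`.

```python
from typing import Callable


def approx_token_count(text: str) -> int:
    """Rough stand-in for a real tokenizer (assumption: ~4 characters per token).

    This is only a rule of thumb for English text, not an exact count.
    """
    return -(-len(text) // 4)  # ceiling division; 0 for empty text


def fits_budget(
    prompt: str,
    context_limit: int,
    reserved_for_response: int,
    count_tokens: Callable[[str], int] = approx_token_count,
) -> bool:
    """Return True if the prompt plus the reserved response tokens fit the limit."""
    return count_tokens(prompt) + reserved_for_response <= context_limit


def trim_to_budget(
    prompt: str,
    context_limit: int,
    reserved_for_response: int,
    count_tokens: Callable[[str], int] = approx_token_count,
) -> str:
    """Drop trailing words until the prompt fits (a deliberately naive strategy)."""
    words = prompt.split()
    while words and not fits_budget(
        " ".join(words), context_limit, reserved_for_response, count_tokens
    ):
        words.pop()
    return " ".join(words)


if __name__ == "__main__":
    # Hypothetical values chosen for illustration only.
    prompt = "Summarize the following report in three bullet points. " * 20
    limit, reserved = 200, 50
    print("estimated prompt tokens:", approx_token_count(prompt))
    print("fits as-is:", fits_budget(prompt, limit, reserved))
    trimmed = trim_to_budget(prompt, limit, reserved)
    print("estimated tokens after trimming:", approx_token_count(trimmed))
```

Cutting words from the end is the simplest way to show the iterative refinement step. In practice, rewriting or summarizing the prompt usually preserves more meaning than truncating it.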

⭐ Prompt Token Counter Core Features

- ✔️ Token tracking

- ✔️ Token management

- ✔️ Prompt pre-processing

- ✔️ Token count adjustment

- ✔️ Efficient interaction management